
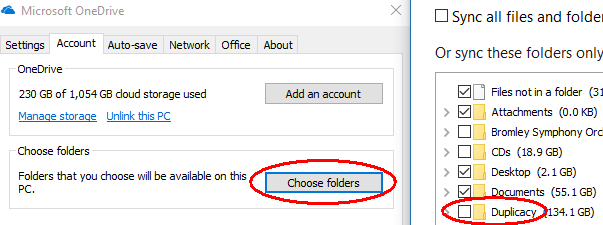

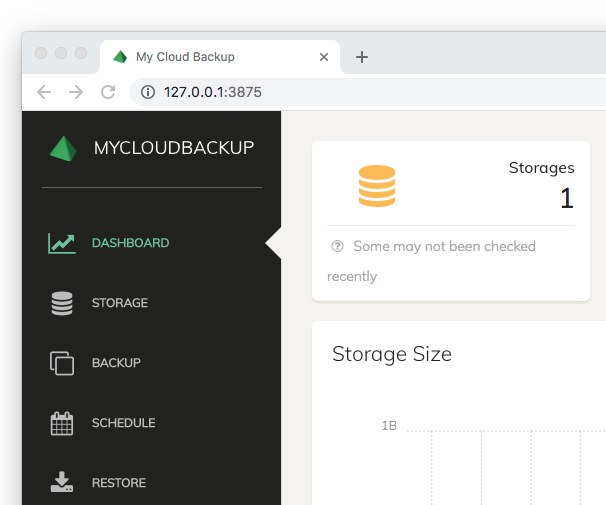

Rayyan automatically detects and resolves exact (100%) duplicate articles from searches. Rayyan then offers duplicate resolution for statistically likely duplicates by comparing the title, author, journal and year. Rayyan was ranked number one for its combination of accuracy and sensitivity for deduplication in an independent third-party study.

To detect duplicates in Rayyan, follow the steps below:

- While detection is in progress, you will see the message: Duplicate detection is running, please wait.
- Once duplicate detection is finished, you will see the message: x duplicates found. Please continue to the next step to resolve the duplicates.
- You will also see the Possible Duplicates facet in the workbench at the left (you might need to scroll down, as the box might be added at the bottom of the workbench).
- Under Possible Duplicates, click Unresolved to filter by unresolved duplicates.
- Click on the record you wish to resolve. The detected duplicates will be listed on top of each other in the bottom section. A confidence percentage will be displayed for each.
- Compare the detected duplicates and click Delete if the references are duplicates, or Not duplicate otherwise.

Note: Detecting duplicates can be performed once on each unique dataset in a review. If you see the message "A search must be added or deleted before running duplicate detection again", you have already run duplicate detection on your dataset. If you wish to run it again, you will have to add or delete references/articles from your review first.

In addition, Rayyan now offers subscribers the Auto-resolver feature for de-duplicating large datasets of articles. Auto-resolver lets users resolve duplicates with a high confidence percentage in one click, speeding up de-duplication and saving you time.

Note: If 2 (n) duplicates are found, you only need to make a decision (Delete / Not a duplicate) on 1 (n-1) of them; the other will be moved to resolved automatically.

+1 for multiple backup corruption issues with repairs taking multiple days and one never completing. Luckily, it was just during testing, but it was about 6 months of testing and a lot of time configuring things to perfectly fit my complicated setup. I let it burn me once, but then it happened 2-3 more times, and after the failed repair I was out. I really wanted it to work, given that it's free, is pretty flexible if you're willing to dive into the more hidden features, and runs on pretty much everything.

Been there for about a year with complicated backup routines and it is holding up just fine. A lot of things about it are ass-backwards, and their filtering system is powerful but completely unintuitive, with many obnoxious "gotchas." It probably took about 2-3 months of small tweaking to finally get it to a place where I'm not constantly checking it to make sure everything's working properly. Duplicacy's forums are also super helpful, and I've been a part of a few conversations that resulted in improvements to the platform.

This is all inside Windows Server, though. I'm in the process of migrating to UNRAID, so seeing how Duplicacy manages there is a major piece of this migration puzzle. Been lurking on these forums for more info on people's experiences!

EDIT: I should also mention that if you compare the forums of Duplicacy and Duplicati, you'll notice the former is mostly about how to use and improve the product, and the latter is often about people in trouble, often with corrupt backups, lost data, etc., pleading for help.

If you have the time, patience, and/or know-how (pick 2 :P) to write a bash script, you can use the User Scripts plugin and add a bash script that periodically runs rsync to sync your files to an external hard drive. If you're syncing several TB or more, the first run will take a long time, but after that rsync only needs to update the files that have changed, so it's pretty quick. No UI, alas, so it's going to be all command-line and text logs, but rsync is veeery robust, and if it does somehow fail anywhere, the log output is file-by-file with plenty of detail.

Of course, sync'ing isn't the same as backup, but rsync does have the capability to do snapshots once you get into the advanced features (e.g. `rsync -a --delete --link-dest` will make a new incremental backup with hardlinks to all unchanged files, rather than duplicating them). Bash scripting was relatively new to me when I started, so at first I just had a short little raw backup script, but every now and then I put a bit of time into improving it little by little.
